Signal Theory of Intelligence

for the European Union’s Human Brain Project

5 The dyadic product and its intelligence-generating capacity

5.1 Introduction to the dyadic product

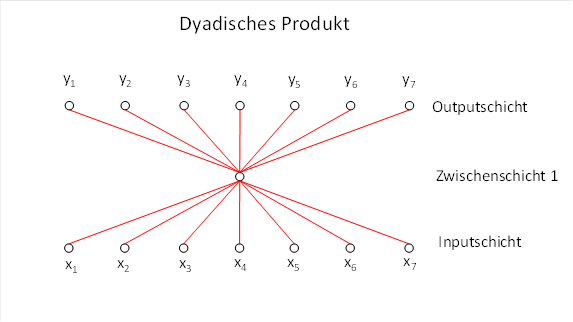

A dyadic product of two vectors, also known as an outer product, can be illustrated by a neural circuit in which an input vector is projected onto a single intermediate neuron, and this intermediate neuron distributes its output across several output neurons.

The associated neural circuit utilises two fundamental properties of neurons:

· Neurons can contact the axons of several input neurons via their dendrites, forming synapses at the points of contact. Each of these input synapses can be assigned a synaptic strength, which can be represented by a number. In AI, these synaptic strengths are also referred to as connection weights.

· A neuron can contact several output neurons via a branched axon and excite them when it is itself excited. Here too, synapses transmit the excitation, and a synaptic strength—i.e. a weight—can also be assigned to each of them.

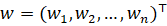

The weights of the input layer can be represented as a weight vector w:

$$w = (w_1, w_2, \dots, w_n)^T.$$

The weights of the output layer can also be represented as a weight vector v:

$$v = (v_1, v_2, \dots, v_m)^T.$$

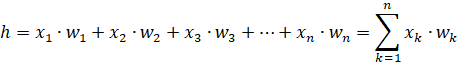

If we denote the excitation of the interneuron by h, this can be represented as the scalar product of the input vector x with the input weight vector w:

$$h = w^T x = \sum_{i=1}^{n} w_i x_i$$

The output h of the hidden neuron is now distributed across the m output neurons, whereby the output weights of v come into play:

$$y_1 = v_1 h$$

$$y_2 = v_2 h$$

$$y_3 = v_3 h$$

…

$$y_m = v_m h$$
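The two stages described above, convergence onto the interneuron followed by divergence to the output neurons, can be sketched in a few lines of plain Python. This is only an illustration under assumed values: the function name `hidden_unit_forward` and all numbers are hypothetical and do not come from the text.

```python
def hidden_unit_forward(x, w, v):
    """Two-stage pass through one intermediate neuron (illustrative sketch)."""
    if len(x) != len(w):
        raise ValueError("x and w must have the same length")
    # Convergence: scalar product of the input with the input weights.
    h = sum(w_i * x_i for w_i, x_i in zip(w, x))
    # Divergence: the scalar h is scaled by each output weight.
    y = [v_j * h for v_j in v]
    return h, y


# Hypothetical values: n = 3 inputs, m = 2 outputs.
x = [1, 2, 3]
w = [2, 0, 1]
v = [1, -1]
h, y = hidden_unit_forward(x, w, v)
print(h, y)
```

Note that the input and output dimensions need not match: the interneuron reduces any number of inputs to one scalar before it is spread across the outputs.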

This relationship between the input vector x and the output vector y can also be represented by the dyadic product of the two weight vectors v and w.

A single hidden neuron (hidden unit) receives an input vector $x \in \mathbb{R}^n$ and generates a scalar from it

$$h = w^T x,$$

where $w \in \mathbb{R}^n$ is the weight vector of the inputs.

This scalar is then projected onto an output vector $y \in \mathbb{R}^m$, typically linearly via a second weight vector $v \in \mathbb{R}^m$:

$$y = v\,h$$

$$y = v\,(w^T x)$$

Thus, the entire mapping $x \mapsto y$ is a linear transformation that can be written as matrix A.

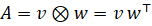

$$y = A\,x \quad \text{with} \quad A = v\,w^T.$$

For the dyadic product (outer product), a separate symbol, the tensor product symbol $\otimes$, is used to clearly distinguish it from the scalar product:

$$v \otimes w = v\,w^T$$

Thus, the matrix A, which describes the linear mapping between the input vector and the output vector, can also be written as the dyadic product of the two weight vectors involved:

$$A = v \otimes w = v\,w^T$$
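As a minimal sketch, the equivalence between the matrix view and the interneuron view can be checked numerically. The helper names `outer` and `matvec` and the values below are assumptions made for illustration only.

```python
def outer(v, w):
    """Dyadic (outer) product as a list of rows: entry (i, j) is v[i] * w[j]."""
    return [[v_i * w_j for w_j in w] for v_i in v]


def matvec(A, x):
    """Matrix-vector product A x."""
    return [sum(a_ij * x_j for a_ij, x_j in zip(row, x)) for row in A]


# Hypothetical values: n = 3 inputs, m = 2 outputs.
x = [1, 2, 3]
w = [2, 0, 1]
v = [1, -1]

A = outer(v, w)                       # 2 x 3 matrix
y_matrix = matvec(A, x)               # apply A directly to x
h = sum(w_i * x_i for w_i, x_i in zip(w, x))
y_neuron = [v_i * h for v_i in v]     # interneuron view: v * (w . x)

print(A)
print(y_matrix, y_neuron)
```

Both routes give the same output vector. The matrix route needs m·n multiplications, whereas the interneuron route needs only n + m.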

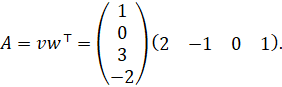

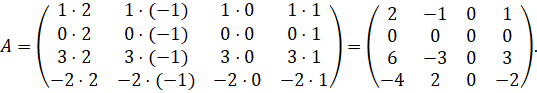

5.2 Example of the dyadic product

We will illustrate the relationships involved in the dyadic product using a concrete example.

- Input vector $x$

- Input weight vector $w$ (to the hidden neuron)

- Output weight vector $v$ (from the hidden neuron to the output space, also chosen to be 4-dimensional)

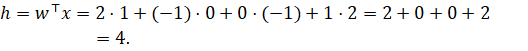

5.2.1 Step 1: Scalar in the hidden neuron

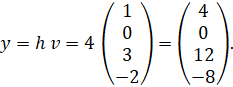

5.2.2 Step 2: Output vector via $y = v\,h$

This is the ‘intermediate neuron + output weights’ view.

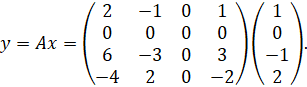

5.2.3 Step 3: Dyadic product as a total matrix

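The overall matrix is

$$A = v \otimes w = v\,w^T.$$

Expanded:

$$A = \begin{pmatrix} v_1 w_1 & v_1 w_2 & v_1 w_3 & v_1 w_4 \\ v_2 w_1 & v_2 w_2 & v_2 w_3 & v_2 w_4 \\ v_3 w_1 & v_3 w_2 & v_3 w_3 & v_3 w_4 \\ v_4 w_1 & v_4 w_2 & v_4 w_3 & v_4 w_4 \end{pmatrix}$$

Now we apply $A$ directly to $x$:

$$y = A\,x$$

Component by component:

- First component:

$$y_1 = v_1 (w_1 x_1 + w_2 x_2 + w_3 x_3 + w_4 x_4) = v_1 h$$

- Second component:

$$y_2 = v_2 (w_1 x_1 + w_2 x_2 + w_3 x_3 + w_4 x_4) = v_2 h$$

- Third component:

$$y_3 = v_3 (w_1 x_1 + w_2 x_2 + w_3 x_3 + w_4 x_4) = v_3 h$$

- Fourth component:

$$y_4 = v_4 (w_1 x_1 + w_2 x_2 + w_3 x_3 + w_4 x_4) = v_4 h$$

So

$$y = A\,x = v\,h,$$

exactly as in the interneuron view above.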

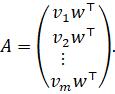

5.3 Why a dyadic product always has rank 1

Consider a matrix of the form

$$A = v\,w^T$$

where $v \in \mathbb{R}^m$ and $w \in \mathbb{R}^n$ are vectors.

5.3.1 All rows are multiples of $w^T$

Let us write $v = (v_1, v_2, \dots, v_m)^T$. Then

$$A = v\,w^T = \begin{pmatrix} v_1 w^T \\ v_2 w^T \\ \vdots \\ v_m w^T \end{pmatrix}.$$

This means:

- The first row is $v_1 w^T$

- The second row is $v_2 w^T$

- etc.

Each row is therefore a scalar multiple of the same row $w^T$.

All rows lie in the same one-dimensional subspace. Thus, the rank of the rows is 1 (provided that $v \neq 0$ and $w \neq 0$).

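5.3.2 All columns are multiples of $v$

Let us write $w = (w_1, w_2, \dots, w_n)^T$. Then

$$A = v\,w^T = \begin{pmatrix} w_1 v & w_2 v & \cdots & w_n v \end{pmatrix}.$$

This means:

- The first column is $w_1 v$

- The second column is $w_2 v$

- etc.

Each column is therefore a scalar multiple of the same column $v$.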

All columns lie in the same one-dimensional subspace. Hence, the column rank = 1.

Row rank = column rank = 1 ⇒ rank(A) = 1

Since the following holds for every matrix:

$$\text{row rank}(A) = \text{column rank}(A) = \operatorname{rank}(A)$$

it follows immediately that:

$$\operatorname{rank}(v\,w^T) = 1$$
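The rank argument can also be checked computationally. The sketch below relies on a standard fact not stated in the text: a matrix has rank at most 1 exactly when all of its 2×2 minors vanish. The function names and the integer vectors are hypothetical.

```python
from itertools import combinations


def outer(v, w):
    """Dyadic (outer) product: entry (i, j) is v[i] * w[j]."""
    return [[v_i * w_j for w_j in w] for v_i in v]


def all_2x2_minors_vanish(A):
    """True if every 2x2 minor of A is zero, i.e. rank(A) <= 1."""
    rows, cols = range(len(A)), range(len(A[0]))
    return all(
        A[i][j] * A[k][l] - A[i][l] * A[k][j] == 0
        for i, k in combinations(rows, 2)
        for j, l in combinations(cols, 2)
    )


# Hypothetical integer vectors, so the arithmetic is exact.
v = [1, -2, 3]
w = [4, 0, 5, 1]
A = outer(v, w)

rank_at_most_one = all_2x2_minors_vanish(A)
is_nonzero = any(a != 0 for row in A for a in row)
print(rank_at_most_one and is_nonzero)  # both conditions together mean rank exactly 1
```

Each minor has the form $v_i w_j v_k w_l - v_i w_l v_k w_j$, which cancels identically. The nonzero check covers the proviso that neither vector is the zero vector.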

5.3.3 Summary

A dyadic product $A = v\,w^T$ of two nonzero vectors always has rank 1, because all rows are multiples of $w^T$ and all columns are multiples of $v$. Thus, both the rows and the columns span only a one-dimensional subspace.

5.4 Geometric meaning of a dyadic product

For

$$A = v\,w^T$$

the following holds for every input vector $x$:

$$A\,x = v\,(w^T x) = (w^T x)\,v$$

It is therefore immediately clear that:

- The output vector is always a scalar multiple of $v$.

- The direction of the output vector is therefore identical for all inputs (up to sign, since the factor can be negative).

- Only the scaling factor $w^T x$ depends on the input.

That is to say:

Geometrically, a dyadic product $v\,w^T$ maps every input vector to an output vector that always lies on the line spanned by $v$. Only the length of the output vector (and, for a negative factor, its orientation) varies depending on the input.

Thus, the image space is one-dimensional, and the matrix has rank 1.
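A short sketch makes the geometric statement concrete: several different inputs are sent through the same dyadic product, and each output is tested for being parallel to v. The names `apply_dyad` and `is_parallel` and all values are assumptions for illustration.

```python
def apply_dyad(v, w, x):
    """Compute the dyadic product applied to x as v * (w . x)."""
    s = sum(w_i * x_i for w_i, x_i in zip(w, x))
    return s, [v_j * s for v_j in v]


def is_parallel(y, v):
    """True if y is a scalar multiple of the nonzero vector v."""
    n = len(v)
    return all(y[i] * v[j] - y[j] * v[i] == 0
               for i in range(n) for j in range(i + 1, n))


# Hypothetical values.
v = [2, 1, -1]
w = [1, -1, 2]
inputs = [[1, 0, 0], [0, 3, 0], [1, 1, 1], [2, 2, 0]]

results = [apply_dyad(v, w, x) for x in inputs]
for x, (s, y) in zip(inputs, results):
    print(x, s, y, is_parallel(y, v))
```

In this sketch the second input yields a negative factor, so the output points opposite to v. The last input is orthogonal to w and is mapped to the zero vector.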

5.5 The intelligence-generating capacity of dyadic products

The neural circuit of an intermediate neuron, which receives input from several neurons and itself supplies output to several neurons, is one of the most elementary and at the same time most effective structures in the nervous system. Its particular significance stems from a remarkable ability: it can complete incomplete or noisy input, supplement patterns and generate elementary signals in the output that were not explicitly present in the input at all.

Mathematically, this circuit corresponds to a dyadic product. This generates a linear mapping of rank 1, which projects the input onto a characteristic direction in the output space. It is precisely this property that makes dyadic products fundamental building blocks of biological and artificial intelligence.

We discuss this property using three concrete examples.

5.5.1 Example 1 — Reference case: Complete input

We choose simple, easily understandable numbers.

Input vector $x$

Weight vector (synaptic strengths) $w$

Scalar product

$$h = w^T x$$

Divergence weights (output direction) $v$

Reference output

$$y = v\,h$$

This is our complete, ideal output.

5.5.2 Example 2 — Missing input: Output retains the same pattern

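We delete the second component in the input vector x:

Disturbed input $x'$ (second component set to zero)

New scalar product

$$h' = w^T x'$$

New output

$$y' = v\,h'$$

So the new output differs from the reference output only by a scalar factor. Crucially:

$$y' = \frac{h'}{h}\,y$$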

The output is identical in pattern, but globally attenuated.

The interneuron completely supplements the missing component.

5.5.3 Example 3 — Noisy input: Output retains the same pattern

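We take a noisy input:

Noisy input $x''$

New scalar product

$$h'' = w^T x''$$

New output

$$y'' = v\,h''$$

So the output again differs from the reference output only by a scalar factor. Important:

$$y'' = \frac{h''}{h}\,y$$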

The output is not noisy, but merely amplified.

The dyadic product suppresses noise and stabilises the pattern.
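The three cases can be replayed with a small script. The numbers below are hypothetical stand-ins chosen for easy arithmetic and are not the values of the examples above. The helper `dyad_output` is likewise an assumption.

```python
from fractions import Fraction


def dyad_output(v, w, x):
    """Return the interneuron excitation h and the output vector v * h."""
    h = sum(w_i * x_i for w_i, x_i in zip(w, x))
    return h, [v_j * h for v_j in v]


# Hypothetical values, not the numbers used in the examples above.
w = [1, 2, 1]            # synaptic strengths
v = [1, 2, 3]            # divergence weights
x_ref = [1, 1, 1]        # complete input
x_missing = [1, 0, 1]    # second component deleted
x_noisy = [2, 1, 1]      # first component perturbed

h_ref, y_ref = dyad_output(v, w, x_ref)

results = {}
for label, x in [("missing", x_missing), ("noisy", x_noisy)]:
    h, y = dyad_output(v, w, x)
    factor = Fraction(h, h_ref)   # global scaling relative to the reference
    same_pattern = y == [factor * y_j for y_j in y_ref]
    results[label] = (h, y, factor, same_pattern)
    print(label, h, y, factor, same_pattern)
```

With these assumed values the missing-input case is attenuated and the noisy case is amplified. In both cases the scalar factor is the only trace of the disturbance that reaches the output.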

5.6 What these examples demonstrate

These three examples show:

1. Pattern stability

The pattern of the output always remains identical, regardless of whether the input is missing or noisy.

2. Reconstruction

Missing input components are fully restored.

3. Noise suppression

Noisy inputs result in clean output.

4. Scale invariance

All disturbances act solely as a global correction factor:

$$y' = \lambda\,y, \qquad \lambda = \frac{w^T x'}{w^T x}$$

5. Intelligence-generating property

The dyadic product generates output that is not present in the input.

5.7 Theorem on the reconstructive power of the dyadic product

Let $w \in \mathbb{R}^n$ be a weight vector and $v \in \mathbb{R}^m$ a divergence vector. For every input vector $x \in \mathbb{R}^n$, the dyadic product defines

$$y = (v \otimes w)\,x = v\,(w^T x)$$

an output vector whose pattern is determined exclusively by $v$.

If individual components are missing from the input or if the input is noisy, the following applies for every corrupted input $x'$:

$$y' = (v \otimes w)\,x' = \lambda\,y$$

with a scaling factor

$$\lambda = \frac{w^T x'}{w^T x},$$

provided that $w^T x \neq 0$.

Thus, the pattern of the output is preserved in full; missing or noisy input components merely result in a global scaling. The dyadic product is therefore one of the few linear operations capable of recognising incomplete or noisy patterns and reconstructing missing components.
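The scaling relation of the theorem follows in one line from the definition. As a brief derivation, write $x'$ for the corrupted input and $\lambda$ for the scaling factor (this notation is assumed here), and suppose that $w^T x \neq 0$:

$$y' = v\,(w^T x') = \frac{w^T x'}{w^T x}\; v\,(w^T x) = \lambda\,y, \qquad \lambda = \frac{w^T x'}{w^T x}.$$

If the corrupted input happens to be orthogonal to $w$, then $w^T x' = 0$, so $\lambda = 0$ and the output vanishes.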

5.8 Why the dyadic product is so rare

There are very few mathematical operations that:

- supplement missing information,

- reliably detect noisy patterns,

- generate output that is not contained in the input,

- and do all this linearly.

The dyadic product is one of these extremely rare operations.

However, this intelligence-generating property of dyadic products can be completely lost.

Monograph by Dr Andreas Heinrich Malczan